Anyone who has tried using SSAS as a data source (whether in an ETL tool or in a data dump using T-SQL OPENROWSET and/or linked servers) might have noticed that longer queries seem to run for twice as long as the same MDX executed directly against the cube in SSMS.

The reason for this is that the database engine and SSIS need to know the ‘shape’ of the dataset before they read it. This is fine for most datasets, because a relational query has its schema defined by the underlying data, and a ‘get schema’ command to the data source returns the dataset metadata only.

The nature of MDX is such that the schema of the result set can be driven by the data itself (e.g. NON EMPTY could result in columns being excluded) and is not known until the query has run. So when the SSAS server gets a connection and a query that’s only requesting the metadata, it executes the whole query (to work out the metadata) and returns just that. A split second later the command for the data comes in, and SSAS obliges and runs the query again.
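To make the ‘shape depends on the data’ point concrete, here is a toy Python illustration. It is not MDX and does not talk to SSAS; the `non_empty_columns` helper, the member names and the numbers are all made up for the example. It only mimics the effect of NON EMPTY on the column axis: the same query text yields a different set of columns once the underlying data changes.

```python
# Toy stand-in for a cube: measure values keyed by (row member, column member).
# Every name and number here is hypothetical and only illustrates the idea.

def non_empty_columns(cells, columns):
    """Return the columns that have at least one non-empty cell,
    roughly what NON EMPTY on the column axis would leave behind."""
    return [
        c for c in columns
        if any(v is not None for (_row, col), v in cells.items() if col == c)
    ]

columns = ["2021", "2022", "2023"]

# First run: nothing recorded for 2022, so that column disappears.
cells_before = {
    ("Bikes", "2021"): 10,
    ("Bikes", "2022"): None,
    ("Bikes", "2023"): 7,
}

# Later run: data for 2022 has arrived, so the column comes back.
cells_after = dict(cells_before)
cells_after[("Bikes", "2022")] = 4

shape_before = non_empty_columns(cells_before, columns)
shape_after = non_empty_columns(cells_after, columns)

print(shape_before)
print(shape_after)
```

Because the column list can only be worked out by looking at the cell values, a server asked for metadata alone has little choice but to evaluate the query.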

Using a linked server or SSIS (with an OLEDB data source pointing to an SSAS cube) to execute a query against SSAS therefore results in the query running twice: once to return the metadata header and again to return the data.

From the Profiler:

This is totally unnecessary for SSIS because the data flow already has the schema defined, and if the returned dataset is different then the Data Flow Task will fail during execution anyway. Setting DelayValidation=true and ValidateExternalMetadata=false makes no difference; the MDX query is still executed twice.

In most cases this is fine, as queries are answered fairly quickly and caching would probably help when the same query runs twice in quick succession. But in some cases (like mine, where the query is a very complex, calculation-driven, leaf-level query that can take minutes to hours to execute), the query taking twice as long just isn’t going to cut it.

Connecting to SSAS

There are two ways to connect to SSAS from SSIS using the built-in data flow source connectors: OLEDB and ADO.NET.

What’s Actually Happening?

OLEDB

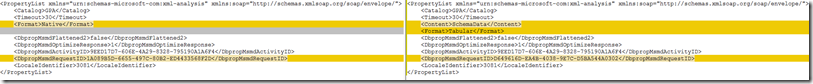

The ‘Query Begin’ event of SSIS executing a query against SSAS has some interesting additional properties. Comparing the two query executions (metadata and data) shows these differences:

<Content>MetaData</Content> and <ExecutionMode>Prepare</ExecutionMode> are the culprits here.

Is there any way to stop this first one from running, or to force it to behave like the second one (so that it also returns the data)? No, none that I could find :(

I tried to use the Extended Properties area of the connection in SSIS to modify these two properties but had no luck. Setting Content to SchemaData resulted in an error, because returning data isn’t compatible with the Prepare execution mode. There is no doco on these two connection properties that I could find, and I’m not a good enough coder to dive into the DLLs to figure out what the valid options are.

Maybe ADO.NET will work better?

ADO.NET

The slower and less functional cousin of OLEDB (no fast load, and no support for a native query from a variable) also suffers from firing the same query twice, probably because it’s simply the same OLEDB SSAS driver wrapped up in ADO.NET (whatever that means!).

It does, however, send slightly different properties:

Notice how the metadata query has fewer properties. The data query adds <Content>SchemaData</Content> and <Format>Tabular</Format>.
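If you copy the property lists of the two events out of Profiler, the comparison can be scripted rather than eyeballed. The sketch below is only an illustration: the two XML fragments are hand-written stand-ins containing just the properties mentioned above plus a made-up `Catalog` value, and they omit the XML namespace a real trace would carry, so the tag handling may need adjusting for real trace output. `properties` and `added_properties` are my own helper names.

```python
import xml.etree.ElementTree as ET

def properties(xml_text):
    """Parse a <PropertyList> fragment into a {name: value} dict."""
    root = ET.fromstring(xml_text)
    return {child.tag: (child.text or "") for child in root}

def added_properties(metadata_xml, data_xml):
    """Return the properties present on the data query but not on the metadata query."""
    meta = properties(metadata_xml)
    data = properties(data_xml)
    return {name: value for name, value in data.items() if name not in meta}

# Hypothetical, simplified stand-ins for what Profiler shows for each execution.
metadata_query = "<PropertyList><Catalog>MyCube</Catalog></PropertyList>"
data_query = (
    "<PropertyList>"
    "<Catalog>MyCube</Catalog>"
    "<Content>SchemaData</Content>"
    "<Format>Tabular</Format>"
    "</PropertyList>"
)

added = added_properties(metadata_query, data_query)
print(added)
```

For these stand-in fragments the only extra properties on the data query are `Content` and `Format`, which are the two worth trying in the connection’s extended properties.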

Could simply adding Content=SchemaData and Format=Tabular to the extended properties of the ADO.NET connection be enough?

Yes! It is!

Conclusion

Unfortunately, the easier-to-use OLEDB data flow connection double dips when extracting data. ADO.NET is the only other option if time is a factor.

Simply add the following to your ADO.NET Connection Manager under All|Advanced|Extended Properties to stop it from querying the cube twice:

Content=SchemaData;Format=Tabular
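To check that the change has taken effect, count how often the same MDX text starts executing in a Profiler trace. The Python sketch below assumes you have exported the trace into a list of `(event class, query text)` pairs; that layout, the `execution_counts` helper, the sample MDX and the default event name `Query Begin` are all assumptions for the example, so adjust them to match your own trace.

```python
from collections import Counter

def execution_counts(events, start_event="Query Begin"):
    """Count how many times each distinct query text started executing."""
    return Counter(
        text.strip()
        for event_class, text in events
        if event_class == start_event
    )

# Hypothetical MDX and trace rows, purely for illustration.
mdx = "SELECT [Measures].[Sales] ON 0 FROM [MyCube]"

# Without the extended properties: one run for metadata, one for data.
trace_without_fix = [
    ("Query Begin", mdx), ("Query End", mdx),
    ("Query Begin", mdx), ("Query End", mdx),
]

# With Content=SchemaData;Format=Tabular set: a single run.
trace_with_fix = [
    ("Query Begin", mdx), ("Query End", mdx),
]

runs_without_fix = execution_counts(trace_without_fix)[mdx]
runs_with_fix = execution_counts(trace_with_fix)[mdx]

print(runs_without_fix, runs_with_fix)
```

A count of 2 for a query that the package issues once means the metadata pass is still executing it.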

Enjoy!